From brain-inspired research to energy-efficient intelligence at scale

Key Takeaways

- Neuromorphic computing is moving from concept to deployable edge technology – Advances in memristors, spiking architectures, and hybrid accelerators are pushing neuromorphic systems beyond labs into wearables, robotics, prosthetics, and low-power AI endpoints.

- Hardware reliability remains the primary bottleneck – but spin-memristors are a breakthrough – Spintronic memristors address resistance drift, variability, and environmental instability, enabling long-term, analog synapse-like behavior with up to 100× energy reduction, positioning them as a foundation for commercial neuromorphic hardware.

- Software standardization is the inflection point for ecosystem scale-up – Intel’s open-source Lava framework, developed alongside the Loihi 2 chip, represents the first credible, community-driven neuromorphic software stack, enabling portability across CPU, GPU, and neuromorphic hardware and lowering adoption barriers.

- Hybrid architectures are essential for near-term adoption – Full neuromorphic replacement of von Neumann systems is unrealistic short-term; instead, hybrid CPU/GPU + neuromorphic accelerators allow event-driven intelligence while retaining precision computing, as demonstrated by IBM TrueNorth and Intel deployments.

- Scalability is now an execution challenge, not a theoretical one – Modular designs, advanced materials, and open neuromorphic clouds (e.g., SpiNNaker2) are enabling billion-neuron systems with 18–100× energy efficiency gains, unlocking real-world, large-scale AI use cases by 2025.

Introduction

Neuromorphic devices, engineered to mimic the brain’s sensory and perceptual functions, are emerging as critical enablers for next-generation healthcare wearables, neuro-prosthetics, and human–machine interfaces. Yet despite rapid research progress, industries still struggle with real-time environmental perception, scalability, and integration with existing AI systems. The solution lies in developing standardized neuromorphic architectures, optimizing materials for commercial fabrication, and building hardware–software pipelines that support adaptive, low-power sensing at the edge.

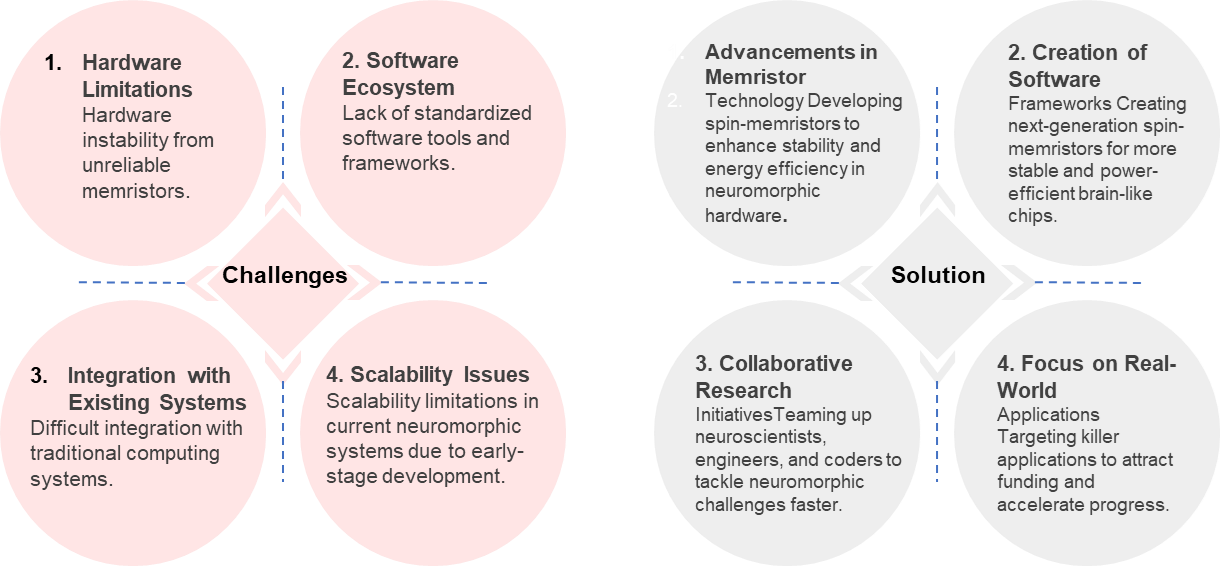

What’s Holding Neuromorphic Devices Back? Key Challenges

Neuromorphic computing holds transformative potential, yet faces four core challenges that currently impede its mainstream adoption. Hardware instability, driven by unreliable memristors exhibiting resistance drift and variability, undermines system reliability and repeatability. The absence of standardized software tools and frameworks forces developers to rely on conventional programming models, severely limiting algorithmic efficiency on neuromorphic architectures. Integrating these brain-inspired systems with traditional von Neumann computing remains complex, often requiring intricate hybrid designs that introduce latency and compatibility issues. Finally, scalability is constrained by the technology’s early-stage development and fabrication limitations, making large-scale, cost-effective deployment difficult despite progress in hybrid architectures and advanced manufacturing techniques. Overcoming these hurdles is essential for unlocking the full power-efficient and cognitive capabilities of neuromorphic systems.

Solutions For Challenges

- Challenges – Hardware Limitations (Hardware instability from unreliable memristors)

- Solution – Advancements in Memristor Technology (Developing spin-memristors to enhance stability and energy efficiency in neuromorphic hardware.)

Execution – Spin-memristors use spintronics (magnetism-based control of electron spin) rather than the ionic or filament-based resistance changes of conventional memristors, integrating magnetic tunnel junctions or domain walls into chips. Fabrication follows CMOS-compatible processes: ferromagnetic layers are deposited, switching is driven by spin-transfer torque, and devices are tested for analog conductance that mimics synapses. Prototypes are built in cleanrooms, trained with voltage pulses to emulate neural plasticity, and then scaled into dense arrays via 3D stacking.
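The idea of "training via voltage pulses" can be illustrated with a toy model. The sketch below is not a model of any real spin-memristor; the class name `AnalogSynapse`, the normalized conductance range, and the soft-bound update rule are all assumptions chosen only to show how repeated pulses nudge an analog weight up or down while keeping it bounded.

```python
from dataclasses import dataclass


@dataclass
class AnalogSynapse:
    """Toy analog synapse (hypothetical, for illustration only).

    Conductance is a continuous, bounded value rather than a 0/1 bit.
    """
    g: float = 0.5       # normalized conductance
    g_min: float = 0.0   # lower bound (assumed)
    g_max: float = 1.0   # upper bound (assumed)
    step: float = 0.1    # fraction of remaining headroom moved per pulse

    def pulse(self, polarity: int) -> float:
        """Apply one programming pulse: +1 potentiates, -1 depresses."""
        if polarity > 0:
            self.g += self.step * (self.g_max - self.g)
        elif polarity < 0:
            self.g -= self.step * (self.g - self.g_min)
        return self.g

    def read(self, voltage: float) -> float:
        """Ohmic read: output current is conductance times read voltage."""
        return self.g * voltage


def program(synapse: AnalogSynapse, pulses):
    """Apply a pulse train and return the conductance after each pulse."""
    return [synapse.pulse(p) for p in pulses]


syn = AnalogSynapse()
trace = program(syn, [+1, +1, +1, -1])  # three potentiating pulses, one depressing
print(trace)
```

Because each update is proportional to the remaining headroom, the conductance approaches its bounds smoothly instead of overshooting, which is one simple way to picture analog, synapse-like storage.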

Companies

TDK

- TDK developed a new “spin-memristor” that uses spintronics to solve the reliability problems of conventional memristors (no resistance drift, long-term stability, immunity to environmental effects) and can store analog values like brain synapses.

- In collaboration with the French research lab CEA, TDK built a tiny AI circuit with these spin-memristors that can separate mixed sounds (music + speech + noise) in real time while learning on the fly, with ~100× lower power than conventional AI hardware.

- Partnering with Tohoku University (Japan) to turn it into real semiconductor chips, aiming for practical ultra-low-power neuromorphic AI devices that could slash AI energy consumption to 1/100th of today’s levels.

Impact –

- TDK’s neuromorphic device aims to handle comparable or more complex AI tasks at just a few watts, roughly a 100× reduction in energy use.

- Huge effect on data centers, edge AI (phones, cars, IoT), and global CO₂ emissions from AI.

- Combining spintronics (TDK’s strength from HDD heads & MRAM) with neuromorphic computing positions TDK as a leader in next-gen low-power AI hardware.

- Challenges – Ecosystem (Lack of standardized software tools and frameworks)

- Solution – Creation of Software Frameworks (Building standardized, open tools for developing, training, and deploying spiking neural networks.)

Execution – Develop modular frameworks in Python (e.g., PyTorch-based) for spiking neural networks (SNNs): define neuron models, simulate on GPUs/CPUs, then compile to hardware via intermediate representations such as NIR (Neuromorphic Intermediate Representation). Community testing ensures interoperability. Models are trained offline and deployed asynchronously to chips, with APIs for event-based data (e.g., spikes instead of frames).
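To make "define neuron models" and "spikes instead of frames" concrete, here is a minimal sketch of a discrete-time leaky integrate-and-fire (LIF) neuron in plain Python. It does not use Lava, NIR, or any other framework API; the function name `simulate_lif` and the weight, leak, and threshold values are assumptions picked for illustration.

```python
def simulate_lif(input_spikes, weight=0.6, leak=0.9, threshold=1.0):
    """Discrete-time leaky integrate-and-fire neuron (illustrative sketch).

    input_spikes: iterable of 0/1 events, one per time step.
    Returns the list of time steps at which the neuron fired.
    """
    v = 0.0          # membrane potential
    fired_at = []
    for t, spike in enumerate(input_spikes):
        v = leak * v + weight * spike   # decay, then integrate the input event
        if v >= threshold:
            fired_at.append(t)          # emit an output spike
            v = 0.0                     # reset after firing
    return fired_at


events = [1, 1, 0, 0, 1, 1, 1, 0]   # event stream, not a dense frame
fired = simulate_lif(events)
print(fired)  # -> [1, 5]
```

The neuron only produces output when enough input events arrive close together; with no input events it never fires, which is the property event-driven hardware exploits to save energy.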

Companies

Intel (Loihi 2 and Lava)

- Loihi 2 is up to 10× faster and far more energy-efficient than the original Loihi. Built on the Intel 4 process with smaller cores and smarter memory use, it packs ~8× more neurons and delivers drastically higher performance per watt. Real-world demos still run on.

- Instead of fixed neuron models, Loihi 2 lets researchers program almost any neuron behavior in microcode and send spikes with actual numbers (not just 0/1). This unlocks way more brain-like algorithms, including ones that approximate backpropagation, so it can now learn complex tasks much faster and more accurately.

- Lava is the first serious attempt at a universal, community-driven software stack for neuromorphic chips (it works on CPU/GPU simulation too). It makes it easier for anyone to develop, train, and deploy spiking neural nets instead of every lab reinventing the wheel, a big step toward actual commercialization.

Impact –

- Loihi 2 delivers real-time, continuously learning AI at under one watt to a few watts (10–1000× less than GPUs) in robots, prosthetics, drones, and sensors today.

- Enables true on-device adaptation in unpredictable environments — something normal AI still can’t do without the cloud and massive power.

- With Lava software and compact 8-chip systems shipping now, it’s the first neuromorphic tech actually moving from lab to commercial edge products in 2024–2025.

- Challenges – Integration with Existing Systems (Difficult integration with traditional computing systems)

- Solution – Collaborative Research Initiatives (Teaming up neuroscientists, engineers, and coders to crack neuromorphic challenges faster.)

Execution – Hybrid accelerators offload event-driven tasks to neuromorphic chips while digital CPUs/GPUs handle precision computing. Frameworks like Intel’s Lava and NeuroBench have matured, supporting over 70 hybrid deployments with unified programming models that translate SNNs into executable code compatible with legacy ecosystems.
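The division of labor in a hybrid design can be sketched in a few lines. In the example below, `event_path` is only a stand-in for work that would be offloaded to a neuromorphic accelerator and `dense_path` is a stand-in for conventional CPU/GPU processing; all function names and the delta-encoding threshold are hypothetical and do not correspond to any real framework.

```python
def to_events(samples, threshold=0.5):
    """Delta encoding: emit (index, change) only when the signal has moved
    by at least `threshold` since the last emitted event."""
    events = []
    last = samples[0]
    for i, x in enumerate(samples[1:], start=1):
        if abs(x - last) >= threshold:
            events.append((i, x - last))
            last = x
    return events


def event_path(events):
    """Stand-in for the neuromorphic side: count rising-edge events."""
    return sum(1 for _, delta in events if delta > 0)


def dense_path(samples):
    """Stand-in for the CPU/GPU side: a full-precision mean over all samples."""
    return sum(samples) / len(samples)


def hybrid_process(samples):
    """Route sparse events to one path and the dense signal to the other."""
    events = to_events(samples)
    return {
        "rising_events": event_path(events),
        "mean": dense_path(samples),
        "events_processed": len(events),
        "samples_processed": len(samples),
    }


signal = [0.0, 0.0, 0.1, 1.0, 1.0, 1.1, 0.2, 0.2]
result = hybrid_process(signal)
print(result)
```

In this toy signal, the event path touches only two items while the dense path touches all eight samples, which is the kind of workload split a hybrid architecture aims for.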

Companies –

IBM (TrueNorth)

TrueNorth, developed by IBM, is a neuromorphic chip that emulates the structure of the brain, making it well suited to AI workloads such as image recognition and machine learning while using far less energy than traditional processing units. The chip provides 1 million programmable neurons and 256 million synapses, so large volumes of data can be processed on-chip with high energy efficiency.

The architecture of TrueNorth is highly parallel, so complex tasks can be carried out with minimal power consumption. This matters for devices that must run AI tasks with minimal latency, including robots, drones, and autonomous vehicles. Its development is a considerable step toward brain-like computational systems that learn and adapt in ways conventional processors cannot.

Impact – TrueNorth is IBM’s landmark brain-inspired chip: 1 million spiking neurons, 256 million configurable synapses, and just 65 mW of power. By mimicking the cortex’s event-driven design, in which memory and compute are co-located, it achieves a reported 10,000× better energy efficiency than traditional processors and directly inspired today’s neuromorphic revolution.
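As a rough sanity check on these figures, the chip-level power can be divided evenly across neurons and synapses. The sketch below uses only the numbers quoted above; the even split is a simplifying assumption, so the results are average budgets, not measured per-element values.

```python
POWER_W = 0.065          # 65 mW total chip power, as quoted above
NEURONS = 1_000_000      # 1 million neurons
SYNAPSES = 256_000_000   # 256 million synapses

# Average power budget per element, in nanowatts (assumes an even split).
per_neuron_nw = POWER_W / NEURONS * 1e9
per_synapse_nw = POWER_W / SYNAPSES * 1e9

print(f"per neuron:  {per_neuron_nw:.1f} nW")
print(f"per synapse: {per_synapse_nw:.3f} nW")
```

Under that assumption the budget works out to about 65 nW per neuron and roughly a quarter of a nanowatt per synapse.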

- Challenges – Scalability Issues (Scalability limitations in current neuromorphic systems due to early-stage development)

- Solution – Focus on Real-World Application (Target killer apps to pull in funding and speed up progress.)

Execution – Scalability has advanced through modular neuromorphic architectures, novel materials and devices such as memristors and spintronics, and open benchmarks, which together ease fabrication bottlenecks and support billion-neuron systems. This mitigates early-stage constraints in neuron density and thermal management, with 2025 seeing a 10× capacity increase across prototypes, paving the way for exascale AI.

Companies –

SpiNNaker2

- Deployed SpiNNaker2 systems deliver 18–100× energy efficiency and 17× lower cost for AI inference, cutting power and cooling needs by 80–95% versus equivalent GPU clusters.

- A partnership with Clever Cloud launched Europe’s first neuromorphic cloud, and SpiNNaker2 joined the U.S. THOR Commons, making billion-neuron hardware openly accessible and positioning SpiNNaker2 as the leading commercial platform for hybrid brain-inspired AI.

Impact – SpiNNaker2’s 2025 deployments cut AI energy use by 18–100×, power bills by 17×, and cooling needs by 80–95% versus GPUs. Live at Sandia (defense) and Leipzig (10.5B neurons, drug discovery), it launched Europe’s first sovereign neuromorphic cloud and joined THOR Commons, helping accelerate the market toward $1.3 B by 2030 while making sustainable, brain-like AI real today.
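Improvement factors ("N× less") and percentage reductions are easy to mix up, so a small conversion helps when comparing the figures above. The helper below is a generic arithmetic sketch; `reduction_percent` is a hypothetical name, and the inputs are simply the factors quoted in this section.

```python
def reduction_percent(factor: float) -> float:
    """Convert an 'N times lower' factor into a percentage reduction."""
    if factor <= 0:
        raise ValueError("factor must be positive")
    return (1.0 - 1.0 / factor) * 100.0


for factor in (17, 18, 100):
    print(f"{factor}x lower -> {reduction_percent(factor):.1f}% reduction")
```

An 18× improvement corresponds to about a 94.4% reduction and a 100× improvement to a 99% reduction, which is useful context when reading factor-based and percentage-based claims side by side.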

Conclusion

Neuromorphic engineering has reached a pivotal stage of maturity, with demonstrated solutions now effectively addressing the core barriers to adoption: hardware variability, lack of standardized software frameworks, integration challenges with conventional systems, and limited scalability.

Through advancements such as Intel’s Loihi 2 and the open-source Lava framework, and IBM’s TrueNorth architecture, the industry has established viable pathways to deliver highly energy-efficient, real-time sensory processing at the edge. These platforms offer programmable neural dynamics, significantly improved performance-per-watt, and the software interoperability required for commercial deployment.

Strategic focus on material stability, unified development ecosystems, hybrid computing interfaces, and application-specific validation will drive the next phase of growth. When fully realized, neuromorphic systems will enable transformative applications in healthcare wearables, neuroprosthetics, autonomous systems, and human–machine interaction—delivering adaptive, low-power intelligence that traditional architectures cannot match.

The foundational challenges are being systematically overcome. With continued industry alignment and investment, neuromorphic computing is positioned to transition from research breakthrough to mainstream technology within the coming decade.

Strategic Recommendation

- Invest in spintronic neuromorphic hardware as a core differentiation lever – Prioritize spin-memristor and MRAM-derived architectures to overcome reliability, variability, and energy-efficiency constraints of conventional memristors. Early leadership in stable analog synapses will define long-term competitive advantage in ultra-low-power AI.

- Adopt hardware–software co-design as a non-negotiable strategy – Neuromorphic success requires tight coupling between device physics, architecture, and software. Organizations should align materials R&D, chip design, and SNN frameworks to avoid fragmented stacks and accelerate commercialization timelines.

- Standardize on open, community-driven neuromorphic software ecosystems – Leverage frameworks such as Lava that support CPU/GPU simulation and direct chip deployment. This lowers developer friction, enables cross-platform portability, and prevents vendor lock-in during early ecosystem formation.

- Deploy neuromorphic systems through hybrid architectures first – Integrate neuromorphic accelerators alongside CPUs and GPUs to handle event-driven, adaptive workloads while retaining precision computing. This hybrid approach minimizes integration risk and enables near-term value creation.

- Anchor scaling strategies around real-world, energy-critical applications – Focus commercialization efforts on domains where neuromorphic computing delivers an undeniable advantage (continuous learning, milliwatt-level power, and real-time perception), such as healthcare wearables, autonomous systems, and edge AI. Killer applications will drive funding, manufacturing scale, and ecosystem pull-through.